As humanoid robots transition from science fiction to real-world applications—working on BMW assembly lines, entering hospitals, and moving toward mass production by companies like Tesla—a critical ethical question is emerging: Are we inadvertently programmed to assign race to machines?

Recent research suggests that as robots become more human-like, the way people perceive them is shaped by the same social hierarchies and prejudices that structure human society. More concerning still, many people appear unable to recognize these biases even as they act on them.

The Hidden Patterns of Choice

A study published in March 2026 by researchers Jiangen He, Wanqi Zhang, and Jessica K. Barfield reveals a striking disconnect between how people choose robots and how they justify those choices.

Participants were presented with workplace scenarios (such as a hospital, a construction site, or a school) and asked to pick a robot from a lineup of robots with different skin tones. Their choices mirrored long-standing human stereotypes:

– Manual labor roles were frequently assigned to robots with darker skin tones.

– Professional and academic roles were often assigned to robots with lighter skin tones.

– Athletic roles were more often assigned to robots with skin tones associated with Black identity.

The “Practicality” Defense

What makes this finding particularly complex is the language of justification. When asked why they chose a specific color, participants rarely cited race. Instead, they used “neutral” or functional reasoning:

* They argued white robots looked “cleaner” for healthcare.

* They claimed dark-skinned robots were better for construction because they “showed less dirt.”

This suggests that people may use seemingly practical reasoning to rationalize underlying social biases, which makes the prejudice hard to see for both the person making the choice and anyone observing it.

The Psychology of Mirroring and Competence

The researchers also found a marked difference in how participants from different racial groups responded to robotic “skin.” Under what is known as racial mirroring, people tend to feel a psychological connection to entities that look like them. However, this effect did not appear uniformly across groups:

“The lack of affective mirroring from Black participants may reflect historical realities where darker skin has been systematically stripped of ‘warmth’ in cultural narratives, forcing a heavier reliance on ‘competence.’” — He, Zhang, and Barfield

While white and Asian participants often chose robots based on how a color made them feel (emotional resonance), Black participants tended to choose dark-skinned robots based on their perceived utility or strength (functional reasoning). This suggests that a group’s social history may shape how its members perceive even non-human entities.

A Divided Scientific Landscape

The academic community is far from a consensus on whether people genuinely perceive robots as having “race.” The debate falls into three main camps:

- The Bias Proponents: Researchers like Christoph Bartneck have used “shooter bias” paradigms, computer simulations in which participants must decide in a split second whether to shoot an armed or unarmed figure, to show that people react to dark-skinned robots with the same prejudices they show toward Black humans.

- The Skeptics: Other scholars, such as Jaime Banks and Kevin Koban, argue that people largely view robots as “non-human agents,” seeing them as tools rather than racialized beings.

- The Contextualists: Anthropologists like Lionel Obadia argue that these findings may reflect American-centric frameworks and might not generalize to how robots are perceived in other cultures.

The Design Dilemma: From Sci-Fi to Reality

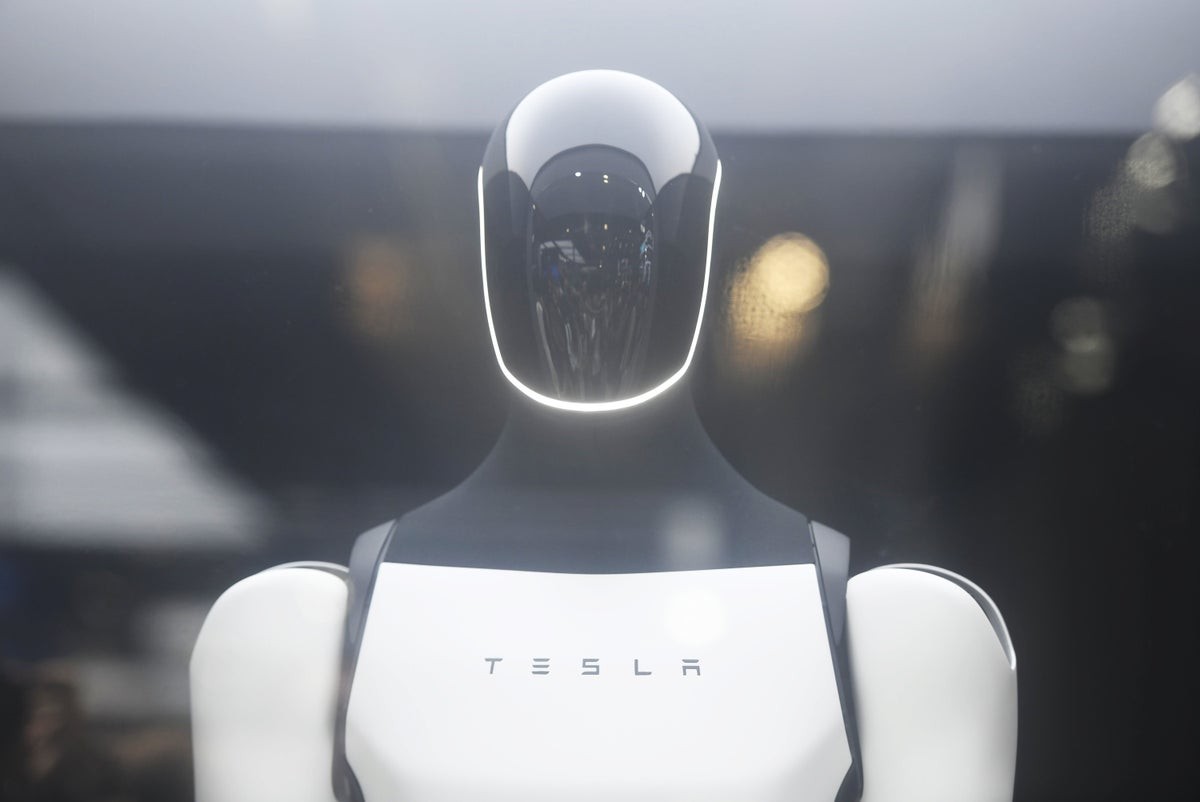

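The debate is no longer purely academic. As companies like Tesla (Optimus) and Figure AI race to deploy humanoids, engineers’ “aesthetic” choices become “socio-technical interventions”: decisions about color and appearance help determine how the public will perceive and treat these machines.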

Philosopher Robert Sparrow notes that robots carry two conflicting cultural narratives:

* The Laborer: The word “robot” comes from the Czech “robota,” meaning forced labor, a framing that casts machines as a servile, racialized underclass.

* The Future: Much of 20th-century science fiction depicted a “white” future, an aesthetic echoed by the many engineers who design sleek, light-colored machines that represent an aspirational, Westernized ideal.

This tension is visible in Tesla’s Optimus, whose color scheme has drawn criticism; some experts suggest the design may inadvertently evoke problematic historical imagery of servitude.

Conclusion

As humanoid robots integrate into the workforce, the risk is not just that they will mimic human labor, but that they will inherit human prejudice. If designers and users do not consciously address these biases, we may build a robotic workforce that reinforces the very social hierarchies we are trying to dismantle.