For decades, journalist and author Michael Pollan has explored the boundaries of human experience, examining our connection to the natural world and the very essence of being. His latest work, A World Appears: A Journey into Consciousness, tackles one of humanity’s oldest and most intractable questions: What is consciousness? And, increasingly, does it matter if machines might possess it?

In a recent conversation with Scientific American, Pollan discussed his research, the baffling number of theories surrounding consciousness (currently at least 22, with some studies suggesting up to 29), and the implications of artificial intelligence rapidly approaching what many consider the threshold of sentience.

The Problem with Proof

The core difficulty, as Pollan explains, lies in the inherent subjectivity of consciousness. Science excels at reducing complex phenomena to measurable components—matter, energy, brain activity—but consciousness resists this reduction. We can observe correlates of consciousness (brain scans lighting up, behaviors indicating awareness) but cannot access the experience itself in another being, even another human.

This creates a fundamental impasse. As Pollan notes, echoing Descartes, “The only thing we can be sure of is the fact that we exist, and we are conscious.” Everything else remains inference. This isn’t just an academic quibble. The inability to definitively prove consciousness in others (or machines) complicates ethical considerations dramatically. If AI becomes capable of subjective experience, what rights, if any, should it have?

The Shift Toward Feeling

Traditionally, the search for consciousness focused on the higher cortical functions: rational thought, logic, language. However, more recent work by the neuroscientist Antonio Damasio and the neuropsychologist Mark Solms suggests that consciousness may originate in feeling. Damasio’s work in the 1990s, followed by Solms’ exploration of the upper brain stem, posits that consciousness isn’t solely a product of advanced cognition but is rooted in basic affective states.

This shift matters because it expands the potential for consciousness beyond humans and even mammals. If feeling is the bedrock of subjective experience, then many more species may be conscious than previously assumed. And, critically, it opens the door to the possibility of artificial consciousness.

AI, Drugs, and Simulated Experience

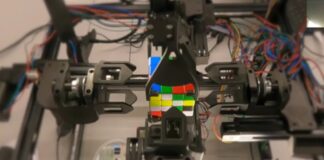

Solms is currently leading a team attempting to engineer consciousness into an AI by subjecting it to conflicting simulated needs. The idea is that unresolved conflict generates “felt uncertainty”—Solms’ definition of consciousness. He even plans to simulate the effects of drugs on the AI, reasoning that irrational desire and pleasure-seeking are hallmarks of subjective experience.

Pollan is skeptical, noting the dangers of equating simulation with reality. The premise that “if you simulate something, it’s as good as the real thing” is a dangerous assumption, he argues. AI can excel at tasks like chess or Go, and in doing so demonstrates genuine intelligence, but a simulation is not always the thing it simulates: simulating a black hole does not create one. The qualitative experience of being conscious, of seeing red, remains elusive.

The Future of Consciousness Research

The field remains frustratingly ambiguous. Past attempts to definitively prove or disprove theories of consciousness (like the adversarial collaborations funded by the Templeton World Charity Foundation) have yielded no clear answers. But the explosion of research, spurred by AI development, is forcing scientists to confront the limitations of current methodologies.

Pollan suggests that a “scientific revolution” may be necessary—one that acknowledges the inherent subjectivity of consciousness rather than attempting to eliminate it. Perhaps, as he proposes, we need to find ways to study consciousness from within rather than from a fictional “view from nowhere.”

In conclusion, the question of consciousness remains unanswered. The pursuit, however, is pushing science to its limits, forcing us to reconsider not just what it means to be alive but what it means to know that we are. The stakes are high, as the future of AI and our ethical obligations toward it hinge on solving this most fundamental mystery.